plugdata

For the past two weeks, I’ve been diving into plugdata, a modern reimagining of Pure Data, a "visual programming environment designed for sound experimentation, prototyping, and education". If you've ever been fascinated by modular synths, interactive sound design, or generative music, plugdata makes these ideas immediately accessible, which is why it won me over so quickly.

It gives me the same enjoyment I have always gotten from modular synthesizers (both physical ones and emulations like VCV Rack) or the Empress Zoia. I love modular thinking: connecting objects and seeing the flow of information. plugdata aligns perfectly with that mindset.

The Catalyst: A Local Workshop on Electronic Instruments

I’ve been experimenting with music, instruments, and coding for years, but two weeks ago, I stumbled upon a three-part electronic instrument workshop nearby. The first session introduced the fundamentals of sound synthesis in Max—and just like that, I was all in again.

The workshop reignited something in me: a desire to build something that exists outside of a traditional computer setup, something tangible and expressive. That led me down a rabbit hole of research into embedded systems that could support the kind of instruments I want to build. That’s how I landed on the Electrosmith Daisy Seed, a powerful microcontroller board designed for audio applications. It can host Pure Data patches (converted to C++), meaning I could eventually run my creations as standalone instruments, no laptop required.

That vision became my new north star. But before I could get there, I wanted to test something simpler: how motion could shape sound in real time.

Bringing Motion into the Equation

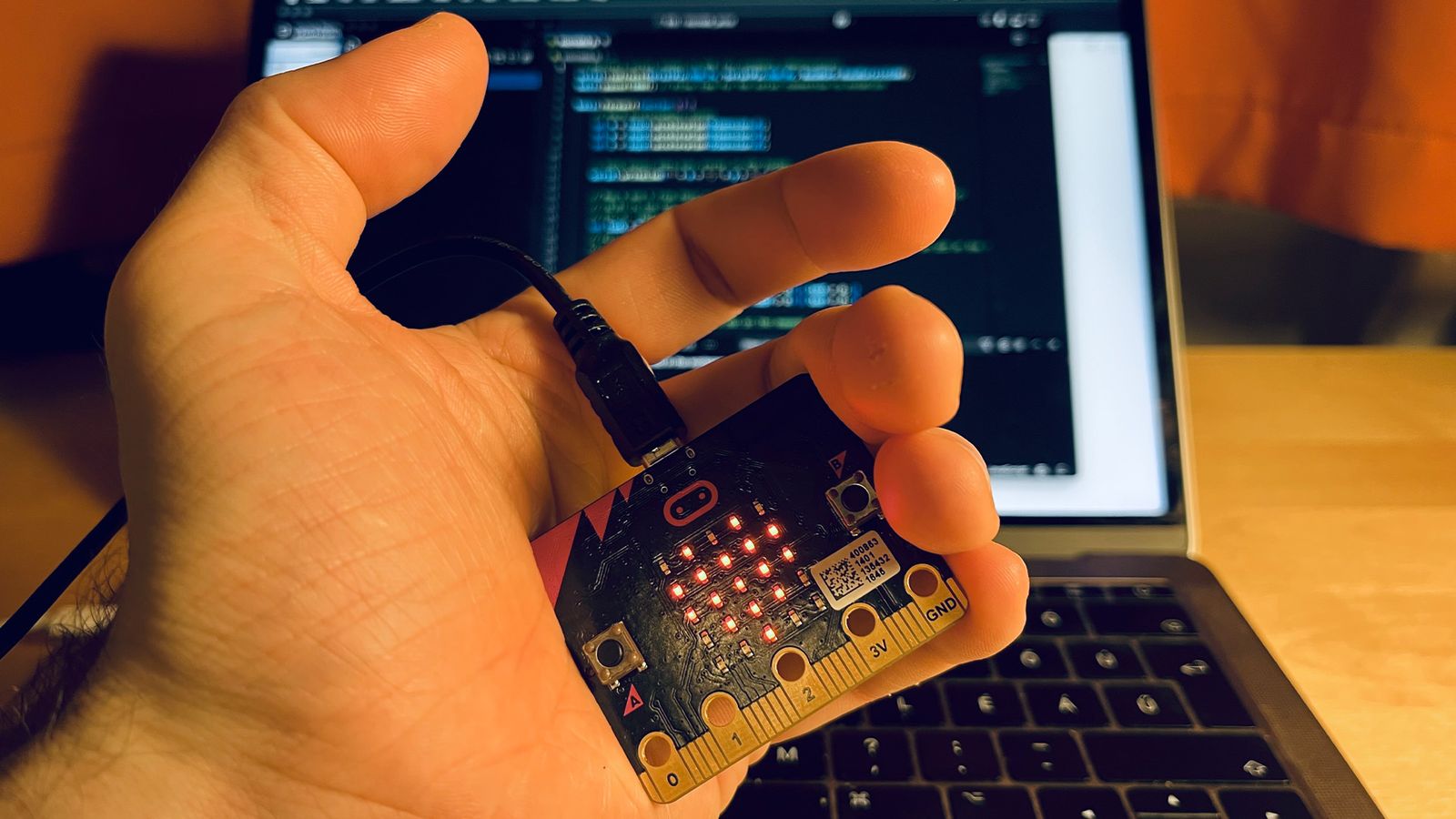

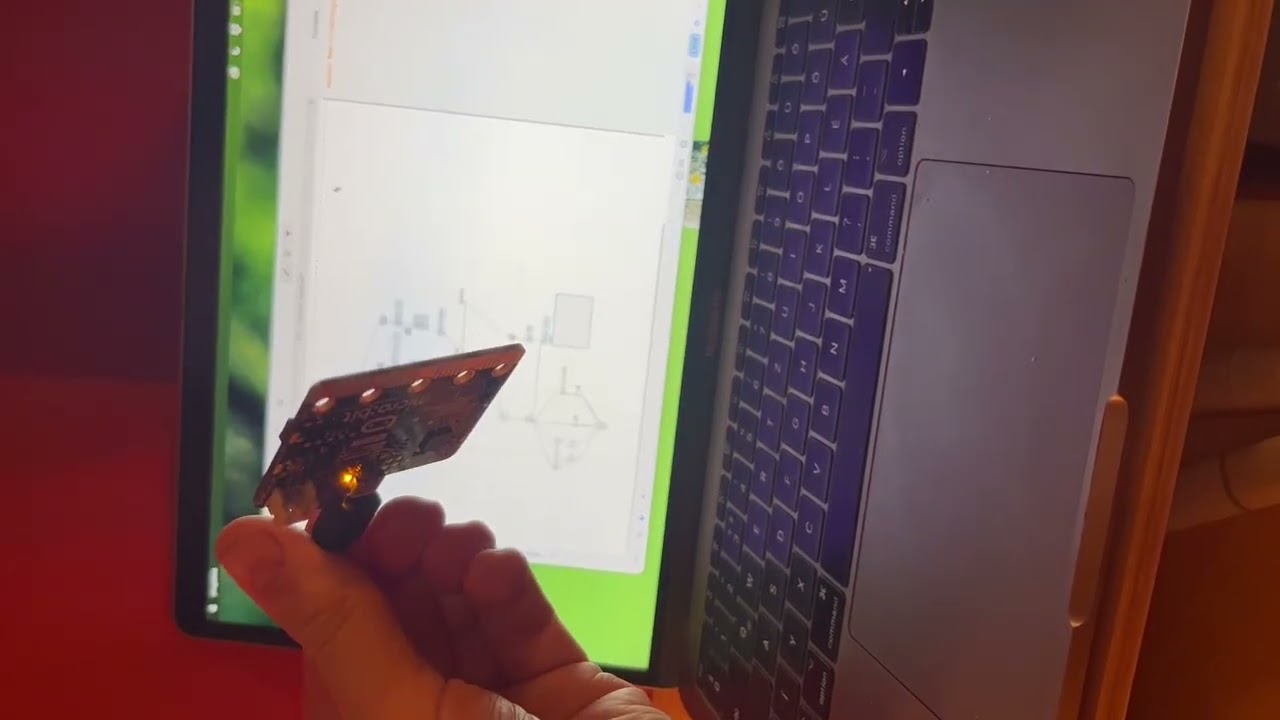

After spending hours patching in plugdata, I wondered—what if I could control sound with motion? I had an early version of a micro:bit lying around, which has a built-in accelerometer. The idea was simple: use the XYZ coordinates of the micro:bit's accelerometer to modify parameters in my patch.

To make this work, I:

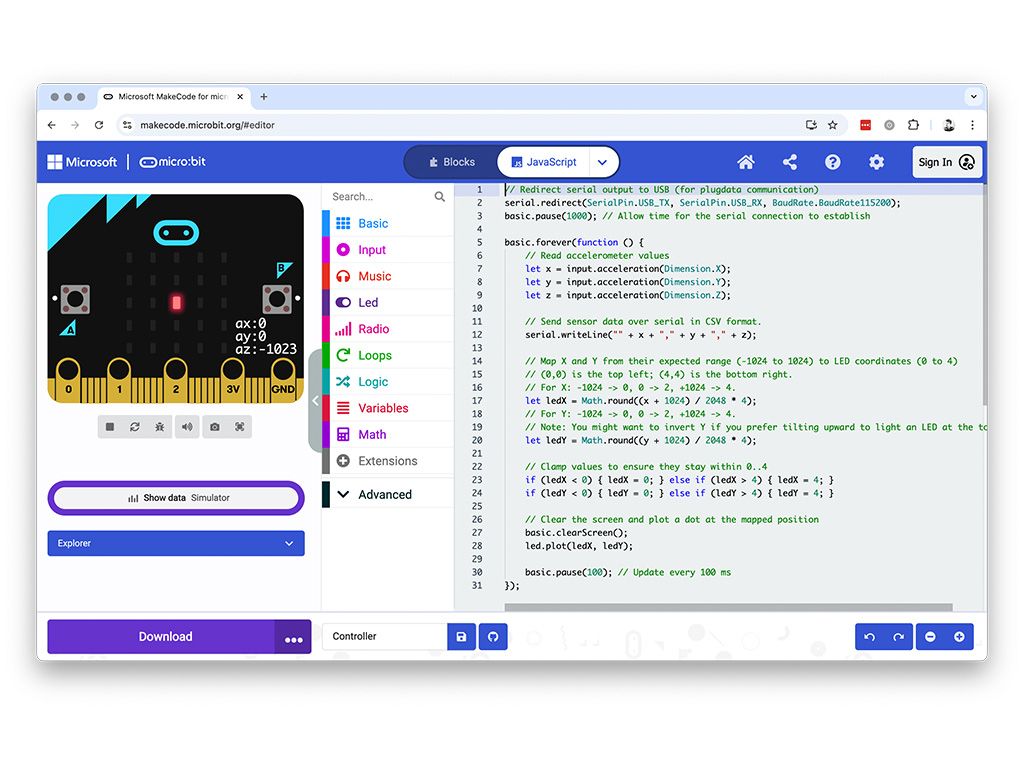

- flashed a custom JavaScript program onto the micro:bit to continuously send accelerometer data,

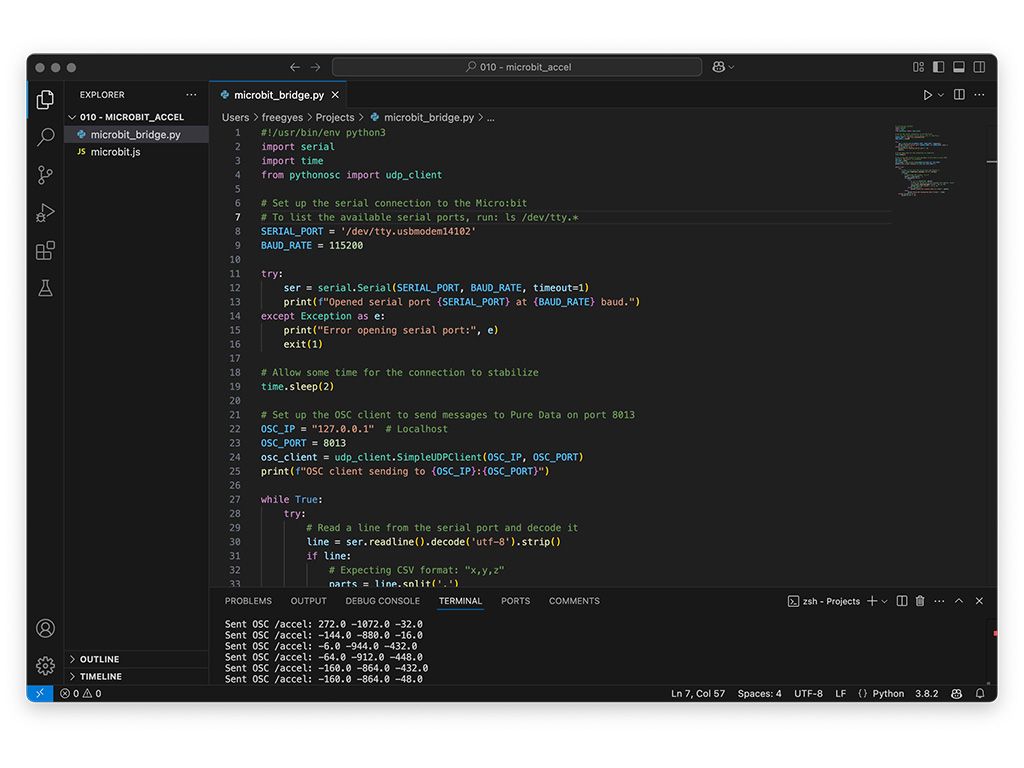

- ran a Python script using python-osc to translate motion data into a format plugdata can read, and

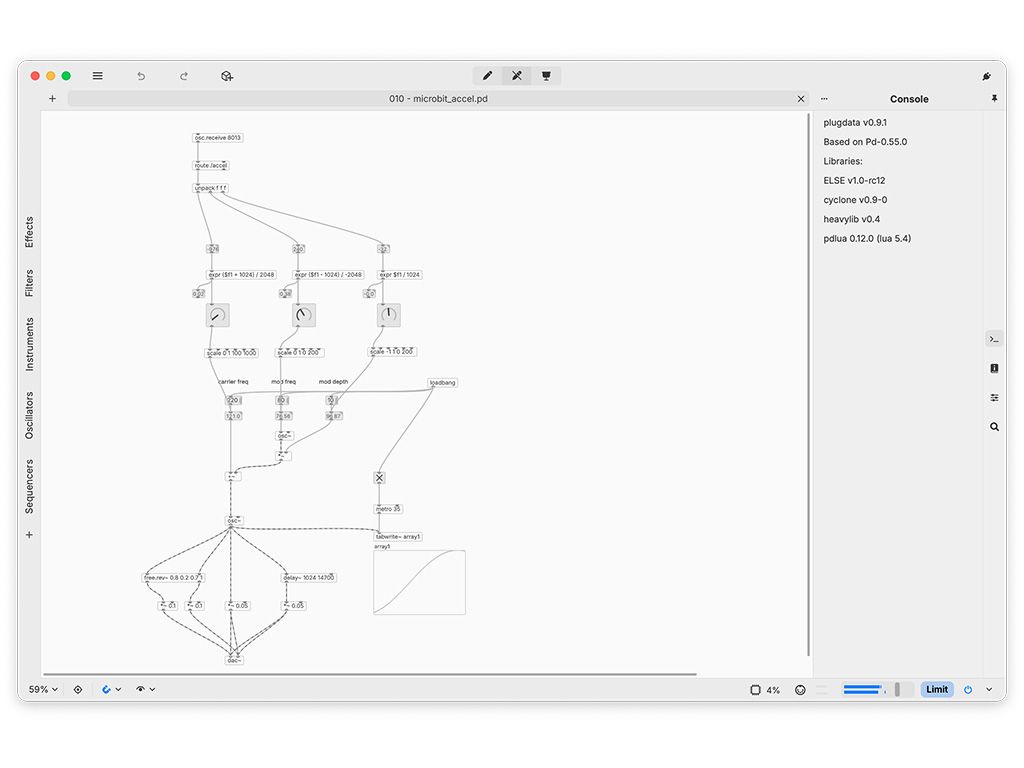

- modified my plugdata patch to receive, filter, and scale the motion data to control sound in real time.
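
For a rough idea of what the middle step can look like, here is a minimal Python sketch of such a bridge. It is not the exact script from this experiment. It assumes the micro:bit prints one `x,y,z` line per reading over USB serial at 115200 baud, with values in milli-g between about -1024 and 1023. The serial port name, the OSC address `/accel`, and UDP port 9000 are placeholders I picked for illustration. The smoothing and scaling happen in Python here only to show the idea; in my setup they live inside the patch.

```python
"""Sketch: micro:bit serial readings -> smoothed, scaled values -> OSC."""


def parse_line(line):
    """Parse 'x,y,z' (integers, assumed milli-g) into a tuple, or None if malformed."""
    parts = line.strip().split(",")
    if len(parts) != 3:
        return None
    try:
        return tuple(int(p) for p in parts)
    except ValueError:
        return None


def scale(value, in_min=-1024, in_max=1023, out_min=0.0, out_max=1.0):
    """Clamp value to the input range, then map it linearly to the output range."""
    clamped = max(in_min, min(in_max, value))
    return out_min + (clamped - in_min) * (out_max - out_min) / (in_max - in_min)


class Smoother:
    """Exponential moving average to tame sensor jitter."""

    def __init__(self, alpha=0.2):
        self.alpha = alpha  # closer to 1.0 reacts faster, closer to 0.0 is smoother
        self.state = None

    def update(self, value):
        if self.state is None:
            self.state = value  # first reading passes through unchanged
        else:
            self.state += self.alpha * (value - self.state)
        return self.state


def run_bridge(port="/dev/ttyACM0", baud=115200, host="127.0.0.1", osc_port=9000):
    """Forward readings forever. Port names and the OSC address are assumptions."""
    # Imported here so the helpers above work without pyserial or python-osc.
    import serial
    from pythonosc.udp_client import SimpleUDPClient

    client = SimpleUDPClient(host, osc_port)
    smoothers = [Smoother() for _ in range(3)]  # one per axis
    with serial.Serial(port, baud, timeout=1) as link:
        while True:
            text = link.readline().decode("ascii", errors="ignore")
            reading = parse_line(text)
            if reading is None:
                continue  # skip timeouts and partial lines
            values = [scale(s.update(v)) for s, v in zip(smoothers, reading)]
            client.send_message("/accel", values)
```

Calling `run_bridge()` with the right serial port starts the loop. On the plugdata side, an OSC receiver listening on the same UDP port (for example `[netreceive -u -b 9000]` feeding `[oscparse]`) can then route the three values to whatever parameters you like.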

A Tiny Card, a Big Feeling

And then, it worked.

I lifted my hand, tilting the tiny card ever so slightly. Instantly, the sound shifted—trembling, bending, shimmering—like a living thing responding to my touch. For a moment, it felt like I was sculpting sound in mid-air. Pure magic.

It reminded me of watching Imogen Heap’s Tiny Desk performance, where she demonstrated her MiMU gloves. That performance was one of many inspirations that planted a seed in my mind years ago—of making music in a way that feels fluid, intuitive, and physically expressive.

Code & Patch Downloads

For anyone interested in taking a closer look at this setup, you can find my experimental code and patch here.

Takeaways & Next Steps

This was a small experiment, but it opened up so many possibilities. What if I combine this with other sensors? What if I replace the micro:bit with a more powerful and versatile device? What if the instrument I eventually build is less about pushing buttons and more about sculpting sound in space?

This was just a small wave of my hand, but it felt like a giant step toward something bigger—an instrument that blends woodworking, sound, coding, and visuals into something truly expressive. I’m hooked.

If You’re Curious

If Max, Pure Data, or plugdata sounds like your kind of thing, Takumi Ogata’s Sound Simulator YouTube series has been my main guide so far, and I can’t recommend it enough. If you end up experimenting with any of them, I’d love to hear what you create.